Stacking my files in the digital age doesn’t look like a warehouse in Indiana Jones, which inspired a wacky TV show; it’s file trees in the drive, and oh boy, how have they changed, right?

Still, I started on Substack, and it’s fun to get back on the horse, as it were, with another social network.

This being a newsletter, what’s the news? Well, sometimes it falls into this: ‘news to me,’ or, in internet speak, ‘I was today years old when I learned that…’ In England and her colonies from 1155 to 1751, New Year’s Day fell on 25 March.

In my defence, this historical datapoint of interest is buried under the subheading ‘New Year’s Day’ in the Julian calendar Wikipedia article. You might think noting when the year begins, as defined by the calendar used by the English-speaking world for an important chunk of its history, is an important headline.

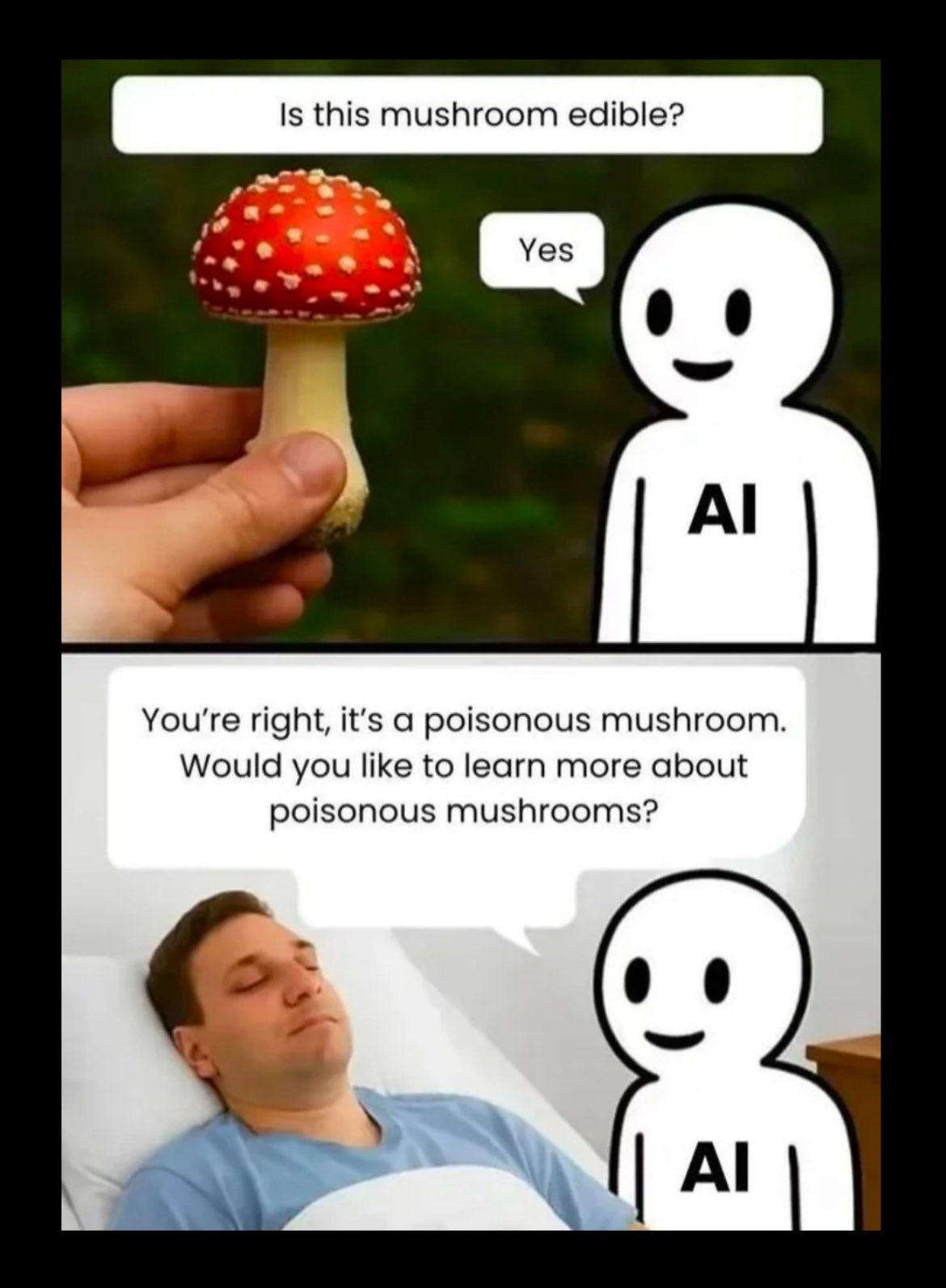

Did I suffer from Too Long; Didn’t Read (TL;DR)? To my embarrassment, yes. Worse, I compounded this error by relying on AI, and so fell foul of a newbie mistake: specificity. I hope you will forgive me for thinking that being specific ought to be helpful, but in tandem with AI’s propensity to summarise, it isn’t. At least not to start with.

On summarisation, I have found that, if left unchecked, AI collating research can delete important information. That includes plotting exercises: stuff I made up about characters and events.

Simply asking, ‘in England, what day of the week did 14th July 1643 fall on’, or ‘day date for Easter 1650’, most of the time, resulted in a long-winded answer boasting about the math… an essay later... the one word I wanted.
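For what it’s worth, both of those questions are plain arithmetic once you commit to the Julian calendar. Here is a minimal sketch, assuming the year number is given in the modern way (starting 1 January) and using the standard Julian Day Number formula plus the well-known Julian Easter computation. The function names are my own, purely for illustration.

```python
WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def julian_day_number(year, month, day):
    """Julian Day Number of a Julian-calendar date.

    Assumption: `year` is counted from 1 January (the modern convention),
    not from 25 March.
    """
    a = (14 - month) // 12          # 1 for January and February, else 0
    y = year + 4800 - a
    m = month + 12 * a - 3          # March = 0 ... February = 11
    return day + (153 * m + 2) // 5 + 365 * y + y // 4 - 32083


def julian_weekday(year, month, day):
    """Weekday name for a Julian-calendar date (JDN % 7 == 0 is Monday)."""
    return WEEKDAYS[julian_day_number(year, month, day) % 7]


def julian_easter(year):
    """(month, day) of Easter Sunday in the Julian calendar."""
    a, b, c = year % 4, year % 7, year % 19
    d = (19 * c + 15) % 30
    e = (2 * a + 4 * b - d + 34) % 7
    month, day = divmod(d + e + 114, 31)
    return month, day + 1


print(julian_weekday(1643, 7, 14))  # Friday
print(julian_easter(1650))          # (4, 14), i.e. 14 April
```

The one word I wanted, in other words, was ‘Friday’.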

Still, in all that time, neither ChatGPT nor the new kid on the block, Grok, included in their answers to my Julian calendar questions a key detail that is important to any human user: by the way, officially, the year began on March 25th (Lady Day), and did so until 1752. I just asked Google ‘Julian Calendar’, and its AI answer also omitted this detail. When I pointed out, ‘you missed a key historical detail when the New Year began in England and her colonies’, Google ’fessed up, thus: “You’re absolutely right; that transition is a massive point of confusion in historical records.”

So what should I have asked?

It’s hard to know because, frankly, over the last eighteen months or so, I have moved from experimenting with AI to using it, on balance, a tad more than a search engine, and AI’s various providers have all changed radically in performance and ability in that time. I can’t know if a simpler, broader question would have helped me almost a year ago.

In hindsight, I can see the signs. From the start, AI did not default to answering my English question in an English-speaking context; instead, it waffled on around the world. I had to stop it, and add—in England.

However, today, if I ask, “What should I know about the Julian Calendar in England?” I do get the important data point: “Key facts include the New Year starting on March 25th (Lady Day) rather than January 1st.” Still, this just happened: “Dates between Jan 1 and March 24 pre-1752 were often double-dated (e.g., Jan 1648/9) to clarify Old Style vs. historical year.”

‘Jan 1648/9’,...

...only he wouldn’t, as 1648 began March 25th and ended March 24th. January 1648 came after March 1648. Double-dating is a later practice introduced by historians and others, for clarity, after the calendar switch commencing 1752.
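The year-boundary rule above is simple enough to sketch in a few lines. This is a rough illustration only, assuming dates are supplied with the modern 1 January year number and that the 25 March rule applies across the 1155 to 1751 range mentioned earlier. The function names are hypothetical, and the handling of the very first and last years is a simplification of mine.

```python
def old_style_year(year, month, day):
    """Civil (Old Style) year in England for a date given with a
    1 January year number.

    Assumption: 1 January to 24 March still belongs to the previous
    civil year for year numbers after 1155 and up to 1751.
    """
    if 1155 < year <= 1751 and (month, day) < (3, 25):
        return year - 1
    return year


def dual_year_label(year, month, day):
    """Double-dated year label such as '1648/9'."""
    civil = old_style_year(year, month, day)
    if civil == year:
        return str(year)
    if civil // 10 == year // 10:
        suffix = str(year)[-1:]     # same decade: 1648/9
    elif civil // 100 == year // 100:
        suffix = str(year)[-2:]     # decade rolls over: 1649/50
    else:
        suffix = str(year)          # century rolls over: 1699/1700
    return f"{civil}/{suffix}"


print(old_style_year(1649, 1, 30))   # 1648
print(dual_year_label(1649, 1, 30))  # 1648/9
print(dual_year_label(1649, 3, 25))  # 1649
print(dual_year_label(1650, 2, 1))   # 1649/50
```

So a date we would now call 30 January 1649 sat, at the time, in the civil year 1648.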

What does this all then say about me?

Well, I’m writing and speaking English. I bring an English-speaking-world bias to my thinking and questions, and when speaking in English to another competent English speaker, my lived experience is that this common ground is expected. For reasons, AI operates in a way that tries to be blind to such things, I suspect, and this, combined with my learned proclivities, created the trap I fell into.

Have you tripped over similar quirks in your reading/writing/research?

Or has AI omitted the ‘obvious’ cultural detail?

Share in the comments—I’d love to hear.